Virtual machine in Rust type resolution

Let's implement a virtual machine (VM) in the Rust type system! Why? ... Let's move on to more pressing matters.

I took a first stab at this recently when designing a challenge for björn CTF 2025. The full code for that challenge is available here.

The final version is simpler than some of the earlier iterations and ideas I've had: doing interesting things often led to hitting rustc's recursion limit, or simply to a very slow compilation process, where I would stare at rustc doing something for minutes without any indication of its progress.

So let's try again, leaving behind the format of a CTF challenge. Let's also establish couple of requirements (and related questions we'll need to answer) for a VM to actually be a VM and to also be somewhat useful and capable of executing a "program":

- Execution and Control Flow: what is the instruction set? How does the VM perform a single step? How does it branch based on data?

- Output: how can we tell what the VM did?

- Input: how can the user provide data for the program?

In addition, this should all happen in the Rust type system, i.e., the execution of the VM should happen during the compilation of a Rust program.

Execution

Before we decide on an instruction set for our VM, let's think about what a program will even look like. Unsurprisingly, a program will be represented by a (very particular) type. How do we write it? How do we sequence instructions? How does rustc "execute" them in the correct order?

Recursive associated types

We can make use of traits and in particular associated types. A neat way to think about these is as functions mapping a type (the one implementing the trait) to another type (the associated type). In its most basic form this seems unassuming:

trait SomeTrait {

type Assoc;

}

impl SomeTrait for i32 {

type Assoc = bool;

}

Here the type i32 is "mapped" to bool by using SomeTrait::Assoc. Where this gets interesting (for us, anyway) is when the impl block itself is generic:

impl<T> SomeTrait for (i32, T) {

type Assoc = T;

}

Here, any tuple of the shape (i32, T) is "mapped" to T. But we don't have to stop there: the impl block is generic, so of course it can also have trait bounds on its parameters:

impl<T: SomeTrait> SomeTrait for (i32, T) {

type Assoc = <T as SomeTrait>::Assoc;

}

This mapping is now recursive, in that evaluating SomeTrait::Assoc for our tuple requires the compiler to further evaluate SomeTrait::Assoc for the inner type.

Type lists

The choice of a tuple type was not arbitrary. If you are familiar with Lisp or similar, the next step should not surprise you: we can nest these tuples as deeply as we want, creating a "linked list" of types. Let's suppose our VM supports two operations, Foo and Bar.

struct Foo;

struct Bar;

trait VMStep {

type Next;

}

impl<T: VMStep> VMStep for (Foo, T) {

type Next = <T as VMStep>::Next;

}

impl<T: VMStep> VMStep for (Bar, T) {

type Next = <T as VMStep>::Next;

}

impl VMStep for () {

type Next = ();

}

Note the last impl block is there to make sure the end of a list is still "executable" by mapping () to itself. We can finally write a program!

type Program = (Foo, (Bar, (Foo, (Foo, ()))));

Manipulating data and const generics

The program currently does nothing at all: both of our two instructions are no-ops. But of course, there is no data for the program to do anything with! As with the program itself, we will need to somehow embed the state of the executing program into a type. To begin with, let's try to use const generics. This changes our representation of a program slightly, from a tuple to a struct, because we cannot make const generics be part of a tuple type. For simplicity, the entire memory of the program will be a single unsigned 8-bit integer:

struct<const M: u8, T: VMStep> ProgramState(T);

We can then change the VMStep implementation to operate on ProgramState instances:

impl<const M: u8, T: VMStep> VMStep for ProgramState<M, (Foo, T)> {

type Next = ProgramState<{M + 1}, <T as VMStep>::Next>;

}

impl<const M: u8, T: VMStep> VMStep for ProgramState<M, (Bar, T)> {

type Next = ProgramState<{M - 1}, <T as VMStep>::Next>;

}

impl<const M: u8> VMStep for ProgramState<M, ()> {

type Next = ();

}

The funky {M + 1} is rustc's suggestion to stuff a const expression into a generic but... This still does not work!

error: generic parameters may not be used in const operations

--> vm.rs:2:31

|

2 | type Next = ProgramState<{M + 1}, <T as VMStep>::Next>;

| ^ cannot perform const operation using `M`

|

= help: const parameters may only be used as standalone arguments here, i.e. `M`

Unfortunately, it seems we cannot rely on rustc to do const evaluation for us.

Bits and bytes

Since const generics are out, we are back to using our own types to represent data. Defining Boolean logic operations with types, traits, and associated types is quite simple:

struct T; // true, one

struct F; // false, zero

struct BitPair<A, B>(A, B);

trait Not { type Result; }

impl Not for F { type Result = T; } // not 0 == 1

impl Not for T { type Result = F; } // not 1 == 0

trait And { type Result; }

impl And for BitPair<F, F> { type Result = F; } // 0 and 0 == 0

impl And for BitPair<F, T> { type Result = F; } // 0 and 1 == 0

impl And for BitPair<T, F> { type Result = F; } // 1 and 0 == 0

impl And for BitPair<T, T> { type Result = T; } // 1 and 1 == 1

// etc for Or, Xor

(You might be wondering why the binary operation is defined on a BitPair instead of simply using a tuple. Put a pin in that.)

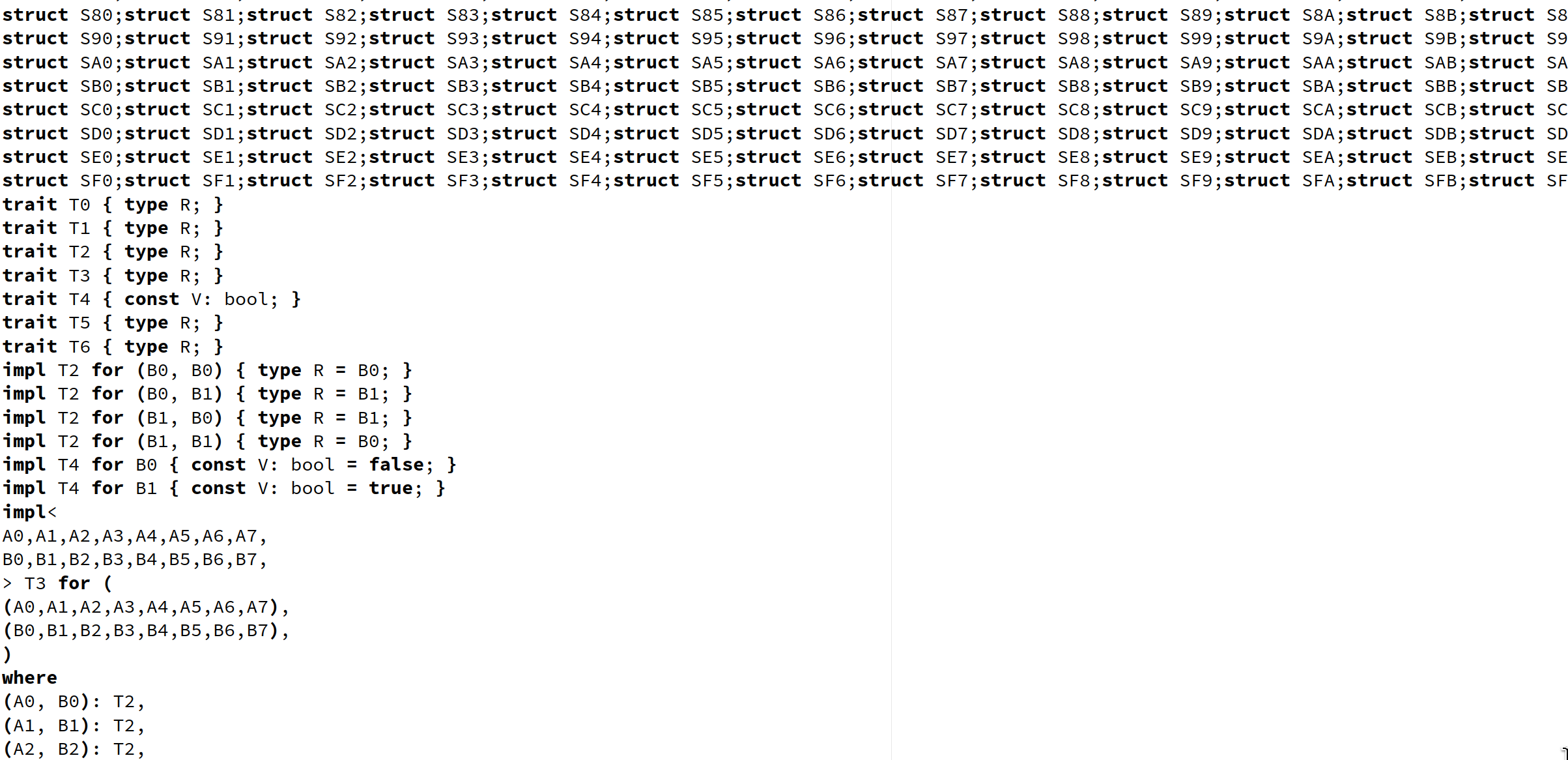

Extending this type of logic to 256 types, representing each possible value of a byte is possible but instantly leads to a hit to compilation performance: every binary operation has 65536 impl blocks. We can sidestep the issue for bitwise operations by defining rules to convert between types representing bytes and types representing bits, for which we need "only" 512 impl blocks:

struct B00;

struct B01;

// ...

struct BFF;

struct BytePair<A, B>(A, B);

trait Convert { type Result; }

// conversion from bytes to bits ...

impl Convert for B00 { type Result = (F, F, F, F, F, F, F, F); }

impl Convert for B01 { type Result = (F, F, F, F, F, F, F, T); }

// ...

impl Convert for BFF { type Result = (T, T, T, T, T, T, T, T); }

// ... and vice versa

impl Convert for (F, F, F, F, F, F, F, F) { type Result = B00; }

impl Convert for (F, F, F, F, F, F, F, T) { type Result = B01; }

// ...

impl Convert for (T, T, T, T, T, T, T, T) { type Result = BFF; }

The bitwise operations can then be defined generically like so:

impl<

A0, A1, A2, A3, A4, A5, A6, A7,

B0, B1, B2, B3, B4, B5, B6, B7,

A: Convert<Result = (A7, A6, A5, A4, A3, A2, A1, A0)>,

B: Convert<Result = (B7, B6, B5, B4, B3, B2, B1, B0)>,

> And for BytePair<A, B>

where

BitPair<A0, B0>: And,

BitPair<A1, B1>: And,

BitPair<A2, B2>: And,

BitPair<A3, B3>: And,

BitPair<A4, B4>: And,

BitPair<A5, B5>: And,

BitPair<A6, B6>: And,

BitPair<A7, B7>: And,

(

<BitPair<A0, B0> as And>::Result,

<BitPair<A1, B1> as And>::Result,

<BitPair<A2, B2> as And>::Result,

<BitPair<A3, B3> as And>::Result,

<BitPair<A4, B4> as And>::Result,

<BitPair<A5, B5> as And>::Result,

<BitPair<A6, B6> as And>::Result,

<BitPair<A7, B7> as And>::Result,

): Convert,

{

type Result = <(

<BitPair<A0, B0> as And>::Result,

<BitPair<A1, B1> as And>::Result,

<BitPair<A2, B2> as And>::Result,

<BitPair<A3, B3> as And>::Result,

<BitPair<A4, B4> as And>::Result,

<BitPair<A5, B5> as And>::Result,

<BitPair<A6, B6> as And>::Result,

<BitPair<A7, B7> as And>::Result,

) as Convert>::Result;

}

(Back to the BitPair and BytePair thing: the above impl block causes a requirement evaluation overflow in rustc if we use tuples. The issue is that the impl block itself would apply to any tuple before considering the trait constraints. Thus, evaluating the Result type for the individual bits does not go directly to the rules we defined for stand-alone bits as intended, but also tries to use the newly defined rule for bytes. The where clause then needs to be evaluated... causing it to look at the bit and byte pair rules again... etc.)

Still quite verbose, thanks in part to that somewhat redundant-looking where clause... but it works!

Half adders and adders

But how do we do real arithmetic with our data? How do we subtract, multiply, or divide numbers? Defining the full addition operation for two arbitrary bytes (as we did above for And) would be very verbose without some additional definitions.

Enter the world of logic circuits and in particular half adders! A half adder is a circuit that basically "adds" two bits, in isolation. Similarly to primary-school digit-by-digit addition, the addition of two bits may result in a carry that will then need to be propagated to the next more significant bit position.

trait HalfAdd {

type Result;

type Carry;

}

impl HalfAdd for BitPair<F, F> { type Result = F; type Carry = F; }

impl HalfAdd for BitPair<F, T> { type Result = T; type Carry = F; }

impl HalfAdd for BitPair<T, F> { type Result = T; type Carry = F; }

impl HalfAdd for BitPair<T, T> { type Result = F; type Carry = T; }

A full adder is then a circuit that effectively adds three bits: the two input bits and the carry bit coming from the previous operation.

struct BitTriple<A, B, C>(A, B, C);

trait FullAdd {

type Result;

type Carry;

}

impl FullAdd for BitTriple<F, F, F> { type Result = F; type Carry = F; }

impl FullAdd for BitTriple<F, F, T> { type Result = T; type Carry = F; }

impl FullAdd for BitTriple<F, T, F> { type Result = T; type Carry = F; }

impl FullAdd for BitTriple<F, T, T> { type Result = F; type Carry = T; }

impl FullAdd for BitTriple<T, F, F> { type Result = T; type Carry = F; }

impl FullAdd for BitTriple<T, F, T> { type Result = F; type Carry = T; }

impl FullAdd for BitTriple<T, T, F> { type Result = F; type Carry = T; }

impl FullAdd for BitTriple<T, T, T> { type Result = T; type Carry = T; }

Here then is the addition of two bytes, in all of its glory, using our half adder and full adder:

trait Add { type Result; }

impl<

A0, A1, A2, A3, A4, A5, A6, A7,

B0, B1, B2, B3, B4, B5, B6, B7,

A: Convert<Result = (A7, A6, A5, A4, A3, A2, A1, A0)>,

B: Convert<Result = (B7, B6, B5, B4, B3, B2, B1, B0)>,

> Add for BytePair<A, B>

where

BitPair<A0, B0>: HalfAdd,

BitTriple<A1, B1, <BitPair<A0, B0> as HalfAdd>::Carry>: FullAdd,

BitTriple<A2, B2, <BitTriple<A1, B1, <BitPair<A0, B0> as HalfAdd>::Carry> as FullAdd>::Carry>: FullAdd,

BitTriple<A3, B3, <BitTriple<A2, B2, <BitTriple<A1, B1, <BitPair<A0, B0> as HalfAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry>: FullAdd,

BitTriple<A4, B4, <BitTriple<A3, B3, <BitTriple<A2, B2, <BitTriple<A1, B1, <BitPair<A0, B0> as HalfAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry>: FullAdd,

BitTriple<A5, B5, <BitTriple<A4, B4, <BitTriple<A3, B3, <BitTriple<A2, B2, <BitTriple<A1, B1, <BitPair<A0, B0> as HalfAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry>: FullAdd,

BitTriple<A6, B6, <BitTriple<A5, B5, <BitTriple<A4, B4, <BitTriple<A3, B3, <BitTriple<A2, B2, <BitTriple<A1, B1, <BitPair<A0, B0> as HalfAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry>: FullAdd,

BitTriple<A7, B7, <BitTriple<A6, B6, <BitTriple<A5, B5, <BitTriple<A4, B4, <BitTriple<A3, B3, <BitTriple<A2, B2, <BitTriple<A1, B1, <BitPair<A0, B0> as HalfAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry>: FullAdd,

(

<BitTriple<A7, B7, <BitTriple<A6, B6, <BitTriple<A5, B5, <BitTriple<A4, B4, <BitTriple<A3, B3, <BitTriple<A2, B2, <BitTriple<A1, B1, <BitPair<A0, B0> as HalfAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Result,

<BitTriple<A6, B6, <BitTriple<A5, B5, <BitTriple<A4, B4, <BitTriple<A3, B3, <BitTriple<A2, B2, <BitTriple<A1, B1, <BitPair<A0, B0> as HalfAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Result,

<BitTriple<A5, B5, <BitTriple<A4, B4, <BitTriple<A3, B3, <BitTriple<A2, B2, <BitTriple<A1, B1, <BitPair<A0, B0> as HalfAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Result,

<BitTriple<A4, B4, <BitTriple<A3, B3, <BitTriple<A2, B2, <BitTriple<A1, B1, <BitPair<A0, B0> as HalfAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Result,

<BitTriple<A3, B3, <BitTriple<A2, B2, <BitTriple<A1, B1, <BitPair<A0, B0> as HalfAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Result,

<BitTriple<A2, B2, <BitTriple<A1, B1, <BitPair<A0, B0> as HalfAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Result,

<BitTriple<A1, B1, <BitPair<A0, B0> as HalfAdd>::Carry> as FullAdd>::Result,

<BitPair<A0, B0> as HalfAdd>::Result,

): Convert,

{

type Result = <(

<BitTriple<A7, B7, <BitTriple<A6, B6, <BitTriple<A5, B5, <BitTriple<A4, B4, <BitTriple<A3, B3, <BitTriple<A2, B2, <BitTriple<A1, B1, <BitPair<A0, B0> as HalfAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Result,

<BitTriple<A6, B6, <BitTriple<A5, B5, <BitTriple<A4, B4, <BitTriple<A3, B3, <BitTriple<A2, B2, <BitTriple<A1, B1, <BitPair<A0, B0> as HalfAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Result,

<BitTriple<A5, B5, <BitTriple<A4, B4, <BitTriple<A3, B3, <BitTriple<A2, B2, <BitTriple<A1, B1, <BitPair<A0, B0> as HalfAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Result,

<BitTriple<A4, B4, <BitTriple<A3, B3, <BitTriple<A2, B2, <BitTriple<A1, B1, <BitPair<A0, B0> as HalfAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Result,

<BitTriple<A3, B3, <BitTriple<A2, B2, <BitTriple<A1, B1, <BitPair<A0, B0> as HalfAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Result,

<BitTriple<A2, B2, <BitTriple<A1, B1, <BitPair<A0, B0> as HalfAdd>::Carry> as FullAdd>::Carry> as FullAdd>::Result,

<BitTriple<A1, B1, <BitPair<A0, B0> as HalfAdd>::Carry> as FullAdd>::Result,

<BitPair<A0, B0> as HalfAdd>::Result,

) as Convert>::Result;

}

This looks intimidating, but mostly just because we can't give shorter names to components that we keep using again and again. The least significant bit of the result is the result of the half adder applied to the least significant bits of the input. The carry of the half adder is then used in the full adder for the next bit, and so on.

Multiplication and division circuits exist, of course (they are in your processor right now!) but let's stick to what we have.

An instruction set without branching

Let's wrap up what we have for now into a tiny instruction set. The type list representation of programs doesn't allow us to do any loops or branching (yet); we'll tackle that a bit later still. Our first VM will have a state of:

- two 8-bit scratch registers,

AandB; - a stack of 8-bit values; and

- the remaining program to execute.

And the instruction set:

- addition and bitwise operations between registers;

- push, pop operations on the stack; and

- some limited stack juggling.

Here it is:

// arithmetic, register manipulation

struct IncA;

struct DecA;

struct AddAB;

struct NotA;

struct AndAB;

struct XorAB;

struct OrAB;

struct SwapAB;

// stack-register interaction

struct PushA;

struct PushB;

struct PopA;

struct PopB;

// stack manipulation

struct Pop;

struct SwapTop;

struct ProgramState<A, B, S, T>(A, B, S, T);

trait VMStep {

type Next;

}

impl<A, B, S, T> VMStep for ProgramState<A, B, S, (IncA, T)>

where BytePair<A, B01>: Add,

ProgramState<<BytePair<A, B01> as Add>::Result, B, S, T>: VMStep,

{

type Next = <ProgramState<<BytePair<A, B01> as Add>::Result, B, S, T> as VMStep>::Next;

}

impl<A, B, S, T> VMStep for ProgramState<A, B, S, (DecA, T)>

where BytePair<A, BFF>: Add,

ProgramState<<BytePair<A, BFF> as Add>::Result, B, S, T>: VMStep,

{

type Next = <ProgramState<<BytePair<A, BFF> as Add>::Result, B, S, T> as VMStep>::Next;

}

impl<A, B, S, T> VMStep for ProgramState<A, B, S, (AddAB, T)>

where BytePair<A, B>: Add,

ProgramState<<BytePair<A, B> as Add>::Result, B, S, T>: VMStep,

{

type Next = <ProgramState<<BytePair<A, B> as Add>::Result, B, S, T> as VMStep>::Next;

}

impl<A, B, S, T> VMStep for ProgramState<A, B, S, (NotA, T)>

where A: Not,

ProgramState<<A as Not>::Result, B, S, T>: VMStep,

{

type Next = <ProgramState<<A as Not>::Result, B, S, T> as VMStep>::Next;

}

impl<A, B, S, T> VMStep for ProgramState<A, B, S, (AndAB, T)>

where BytePair<A, B>: And,

ProgramState<<BytePair<A, B> as And>::Result, B, S, T>: VMStep,

{

type Next = <ProgramState<<BytePair<A, B> as And>::Result, B, S, T> as VMStep>::Next;

}

impl<A, B, S, T> VMStep for ProgramState<A, B, S, (XorAB, T)>

where BytePair<A, B>: Xor,

ProgramState<<BytePair<A, B> as Xor>::Result, B, S, T>: VMStep,

{

type Next = <ProgramState<<BytePair<A, B> as Xor>::Result, B, S, T> as VMStep>::Next;

}

impl<A, B, S, T> VMStep for ProgramState<A, B, S, (OrAB, T)>

where BytePair<A, B>: Or,

ProgramState<<BytePair<A, B> as Or>::Result, B, S, T>: VMStep,

{

type Next = <ProgramState<<BytePair<A, B> as Or>::Result, B, S, T> as VMStep>::Next;

}

impl<A, B, S, T> VMStep for ProgramState<A, B, S, (SwapAB, T)>

where ProgramState<B, A, S, T>: VMStep,

{

type Next = <ProgramState<B, A, S, T> as VMStep>::Next;

}

impl<A, B, S, T> VMStep for ProgramState<A, B, S, (PushA, T)>

where ProgramState<A, B, (A, S), T>: VMStep,

{

type Next = <ProgramState<A, B, (A, S), T> as VMStep>::Next;

}

impl<A, B, S, T> VMStep for ProgramState<A, B, S, (PushB, T)>

where ProgramState<A, B, (B, S), T>: VMStep,

{

type Next = <ProgramState<A, B, (B, S), T> as VMStep>::Next;

}

impl<A, B, H, S, T> VMStep for ProgramState<A, B, (H, S), (PopA, T)>

where ProgramState<H, B, S, T>: VMStep,

{

type Next = <ProgramState<H, B, S, T> as VMStep>::Next;

}

impl<A, B, H, S, T> VMStep for ProgramState<A, B, (H, S), (PopB, T)>

where ProgramState<A, H, S, T>: VMStep,

{

type Next = <ProgramState<A, H, S, T> as VMStep>::Next;

}

impl<A, B, H, S, T> VMStep for ProgramState<A, B, (H, S), (Pop, T)>

where ProgramState<A, B, S, T>: VMStep,

{

type Next = <ProgramState<A, B, S, T> as VMStep>::Next;

}

impl<A, B, H1, H2, S, T> VMStep for ProgramState<A, B, (H1, (H2, S)), (SwapTop, T)>

where ProgramState<A, B, (H2, (H1, S)), T>: VMStep,

{

type Next = <ProgramState<A, B, (H2, (H1, S)), T> as VMStep>::Next;

}

impl<A, B, S> VMStep for ProgramState<A, B, S, ()> {

type Next = ProgramState<A, B, S, ()>;

}

Output

So... by resolving types we are executing something, but how do we know any of it actually works? How do we get the final type, say, the value in the A register, and output it to the user?

Here we can use another trait feature: associated constants.

trait Valued { const VALUE: u8; }

impl Valued for B00 { const VALUE: u8 = 0x00; }

impl Valued for B01 { const VALUE: u8 = 0x01; }

// ...

impl Valued for BFF { const VALUE: u8 = 0xFF; }

impl<A: Valued, B, S> Valued for ProgramState<A, B, S, ()> {

const VALUE: u8 = <A as Valued>::VALUE; // extract register A

}

Our first program

Without loops or conditional execution, we can't do much! Nevertheless, here is a program that adds the numbers 1, 2, ..., 8 (rather inefficiently by using the stack!), and halts with the total sum in the A register:

type Program = ProgramState::<B00, B00, (),

(IncA, (PushA,

(IncA, (PushA,

(IncA, (PushA,

(IncA, (PushA,

(IncA, (PushA,

(IncA, (PushA,

(IncA, (PushA,

(IncA, (PushA,

(SwapAB,

(PopB, (AddAB,

(PopB, (AddAB,

(PopB, (AddAB,

(PopB, (AddAB,

(PopB, (AddAB,

(PopB, (AddAB,

(PopB, (AddAB,

(PopB, (AddAB,

())))))))))))))))))))))))))))))))))>;

(Hope you like parentheses!)

And to, finally, execute it:

fn main() {

println!("output: 0x{:02x}", <<Program as VMStep>::Next as Valued>::VALUE);

}

Compiling with rustc vm.rs and running ./vm we get...

output: 0x24

Which is correct!

Input

Normally, VMs with input instructions can query the user for more data during execution. This poses a problem for us: how would we interrupt rustc's typing to, say, read data from the terminal? We could perhaps do this by writing a custom rustc driver, hijacking some type resolution callback to wait for user input... But then our VM is not implemented purely in the Rust type system anymore.

So let's declare this impossible without external tools and move on. Any and all "input" will be provided by the user ahead of time (prior to executing the program), for example in the stack in the initial program state. So, if the input is "hello", the initial program state might be:

type Input = (B68, (B65, (B6C, (B6C, (B6F, ()))))); // bytes of "hello"

type Program = ProgramState::<

B00,

B00,

Input,

/* instructions */

>;

Control flow

Finally, the big question: how do we implement conditional jumps and loops? Always executing the same set of instructions, in the same order, does not make for very interesting programs. Let's split this into three separate problems:

- How do we execute different steps based on data?

- How do we jump ahead in a program, skipping over some instructions?

- How do we jump backwards in a program, such that we can execute loops?

Branching on data

This problem is relatively easy to address. Up to now, each of our instructions had a single impl block, defining its behaviour for any valid program state. But with the appropriate choice of constraints, we can write multiple impl blocks (without violating Rust's trait coherence rules)!

Let's consider the instruction PushIfANonZero, which pushes the value of A onto the stack, but only if it is non-zero:

struct PushAIfNonZero;

It is very easy to run into issues here. For example, the following rules cause rustc to hang1:

impl<

A: Convert<Result = (F, F, F, F, F, F, F, F)>,

B, S, T,

> VMStep for ProgramState<A, B, S, (PushAIfNonZero, T)>

where ProgramState<A, B, S, T>: VMStep,

{

type Next = <ProgramState<A, B, S, T> as VMStep>::Next;

}

impl<

A: Convert<Result = (F, F, F, F, F, F, F, T)>,

B, S, T,

> VMStep for ProgramState<A, B, S, (PushAIfNonZero, T)>

where ProgramState<A, B, S, T>: VMStep,

{

type Next = <ProgramState<A, B, S, T> as VMStep>::Next;

}

Regardless, the two impl blocks are still considered overlapping implementations, probably because the constraint on Convert::Result is not taken into account by rustc early enough. The solution here seems to be to make sure the impl blocks are clearly not overlapping (from the compiler's perspective) by referring to types directly rather than using the Convert::Result indirection. These two rules are fine:

impl<B, S, T> VMStep for ProgramState<B00, B, S, (PushAIfNonZero, T)>

where ProgramState<B00, B, S, T>: VMStep,

{

type Next = <ProgramState<B00, B, S, T> as VMStep>::Next;

}

impl<B, S, T> VMStep for ProgramState<B01, B, S, (PushAIfNonZero, T)>

where ProgramState<B01, B, (B01, S), T>: VMStep,

{

type Next = <ProgramState<B01, B, (B01, S), T> as VMStep>::Next;

}

If we wanted this kind of rule to work for any value of the A register, we would need 256 different impl blocks. Fortunately, the instruction we actually care about is a conditional jump, and we can simply require the instruction to only be valid if the value of the A register is either 0 or 1.

Jumping ahead

Now let's switch tracks: how do we jump forward in the program, to a specific point? Typically, a VM might include a (conditional) jump instruction that jumps to a particular address, or perhaps the jump is relative to the current instruction's address. Let's implement the latter.

Ideally, when seeing a "jump forward three steps" instruction, we could directly discard the intermediate instructions. However, with the representation of type lists using tuples, this is a bit impractical: we can only write impl block rules to match a statically known number of instructions.

If we don't need to support indirect jumps (where the target address is found in a register), then we can indeed enumerate 1-byte jumps:

struct JumpForward<T>(T);

impl<A, B, S, T> VMStep for ProgramState<A, B, S, (JumpForward<B00>, T)>

where ProgramState<A, B, S, T>: VMStep,

{

type Next = <ProgramState<A, B, S, T> as VMStep>::Next;

}

impl<A, B, S, T, T1> VMStep for ProgramState<A, B, S, (JumpForward<B01>, (T1, T))>

where ProgramState<A, B, S, T>: VMStep,

{

type Next = <ProgramState<A, B, S, T> as VMStep>::Next;

}

impl<A, B, S, T, T1, T2> VMStep for ProgramState<A, B, S, (JumpForward<B02>, (T1, (T2, T)))>

where ProgramState<A, B, S, T>: VMStep,

{

type Next = <ProgramState<A, B, S, T> as VMStep>::Next;

}

// etc

Combining this with the previous section, we can have a conditional jump:

struct JumpForwardIfA<T>(T);

impl<X, B, S, T> VMStep for ProgramState<B00, B, S, (JumpForwardIfA<X>, T)>

where ProgramState<B00, B, S, T>: VMStep,

{

type Next = <ProgramState<B00, B, S, T> as VMStep>::Next;

}

impl<B, S, T> VMStep for ProgramState<B01, B, S, (JumpForwardIfA<B00>, T)>

where ProgramState<B01, B, S, T>: VMStep,

{

type Next = <ProgramState<B01, B, S, T> as VMStep>::Next;

}

impl<B, S, T, T1> VMStep for ProgramState<B01, B, S, (JumpForwardIfA<B01>, (T1, T))>

where ProgramState<B01, B, S, T>: VMStep,

{

type Next = <ProgramState<B01, B, S, T> as VMStep>::Next;

}

// etc

Jumping backwards

To conclude our basic VM instruction set, let's add backwards jumps, enabling us to write programs with loops. In the rules above, we discard the instructions that have been executed. If we want to jump backwards, we need the entire program to stick around.

Changing our program state representation a bit, we can have the list of instructions to be executed, as before, in addition to another list: the list of instructions we have already executed, in reverse order. The initial

type Program = ProgramState::<

B00, // register A

B00, // register B

Input, // stack (pre-filled with user input)

(), // previous instructions (empty)

/* instructions */, // future instructions

>;

Any rule which processes an instruction from the program will now add that instruction into the backwards list:

impl<A, B, S, P, T> VMStep for ProgramState<A, B, S, P, (IncA, T)>

where BytePair<A, B01>: Add,

ProgramState<<BytePair<A, B01> as Add>::Result, B, S, (IncA, P), T>: VMStep,

{

type Next = <ProgramState<<BytePair<A, B01> as Add>::Result, B, S, (IncA, P), T> as VMStep>::Next;

}

(The data structure we implemented here is in fact a zipper for lists.)

A backwards jump can then make use of our backwards list:

struct JumpBackward<T>(T);

impl<A, B, S, P, P1, T> VMStep for ProgramState<A, B, S, (P1, P), (JumpBackward<B01>, T)>

where ProgramState<A, B, S, P, (P1, (JumpBackward<B01>, T))>: VMStep,

{

type Next = <ProgramState<A, B, S, P, (P1, (JumpBackward<B01>, T))> as VMStep>::Next;

}

// etc

Loopy program

Let's rewrite the previous program using a loop:

type Program = ProgramState::<B00, B00, (), (),

(PushA,

(SetA<B08>, (PushA,

// loop head

(PopA, (PushA, (SetB<B00>,

(EqAB,

(JumpForwardIfA<B09 /* to end */>,

// loop body

(PopA, (PopB,

(DecA, (PushA, (IncA,

(AddAB,

(PushA,

(SwapTop,

(JumpBackward<B0D /* to loop head */>,

// end

(PopA, (PopA,

())))))))))))))))))))>;

And... rustc is not happy with this! The error we get is a cryptic "overflow evaluating the requirement ..." that does not point to anything actually related to this program or any of the new looping instructions.

After much debugging (see below), it turns out it is in fact the compiler that's wrong here. Or, you know, it simply wasn't built for VMs embedded in its type system. But it turns out there is a new trait solver in development for rustc! We can enable it in a nightly compiler build with the command-line argument -Znext-solver.

With this change the program above still doesn't compile, but this time the issue is simple to fix, and understandable: the compiler ran out of patience trying to resolve the type. This is something we can change by increasing the recursion limit:

#![recursion_limit = "256"]

And thus, finally:

$ rustc -Znext-solver v06.rs

$ ./v06

output: 0x24

(With this version it is easy to change the B08 to change how many numbers we're adding up.)

Wrapping up

That's about it for now! The instruction set is very limited but it is in fact Turing complete. It could have been simpler. It could have been a stack machine, forgoing registers altogether. It could have been a brainf**k-like, forgoing most of the other instructions or redability. Still, this struck me as a nice balance and not too far removed from some actual instruction sets.

As it is, this suffices to show: trait resolution in Rust2 is Turing complete!

Development

Developing and especially debugging this VM was not very straightforward. Some mistakes in the impl definitions would result in rustc complaining about overlapping implementations, but these were the easy cases where I had, for example, defined the rule for the same instruction twice (or defined the rule for a new instruction but forgot to change the implementor). The worse, far more numerous, cases were ones where the impl blocks were fine as far as rustc was concerned, but then trying to "execute" a valid program would lead to a cryptic error, usually something like "overflow evaluating the requirement BytePair<_, BFF>: Add", even though the error in question had little to do with addition.

Some issues were caught with small unit tests:

trait RegA { type Result; }

impl<A, B, S, P> RegA for ProgramState<A, B, S, P, ()> {

type Result = A;

}

let v: <<ProgramState<B00, B00, (), (), (SetA<BFF>, (SetB<B01>, ()))> as VMStep>::Next as RegA>::Result;

let _: BFF = v;

This is essentially a compile-time assertion that the resolved type, i.e., the value in the A register at the end of execution, is BFF. If the execution results in a different value, the resulting error is pretty readable:

error[E0308]: mismatched types

--> vm.rs:865:18

|

865 | let _: BFF = v;

| --- ^ expected `BFF`, found `BAA`

| |

| expected due to this

Making programs with loops work was particularly annoying. The backwards jump instruction worked fine in isolation, in programs without loops (in programs where we jump forward, then back, then forward, without ever retracing the same instructions). However, as soon as an actual loop (even one which would never be taken) was introduced into the program, rustc would raise the dreaded overflow error.

At this point I reached for Argus, a tool to debug trait-related errors. By itself it didn't help since the error still happened without Argus showing any error trace, but after talking to Gavin (the author), we figured out that this was because the current trait solver was having issues whereas the new one (used by Argus) worked just fine. Thanks again, Gavin!

"Runtime" errors

When the VM runs into an error during its execution, this in turn causes a rustc compilation error (expectably cryptic). For example, attempting to execute JumpForwardIfA when the A register is neither B00 nor B01. Outside of tools like Argus, I haven't found much of a way to tackle this other than staring at the verbose errors and trying to figure out which rule might have not matched when it should have, or what the state was at a particular step by applying rules manually and simplifying the failing case as much as possible.

Final code

The final version of the VM is available on GitHub. I took some time to document the code, split it up into more navigable files, and clean up repetition where possible with macros. Enjoy!(?)